A new letter to the Federal Trade Commission and the attorneys general of all 50 US states and the District of Columbia calls for an urgent investigation into Elon Musk’s Grok, especially its new “Imagine” tool for AI-generated images and video.

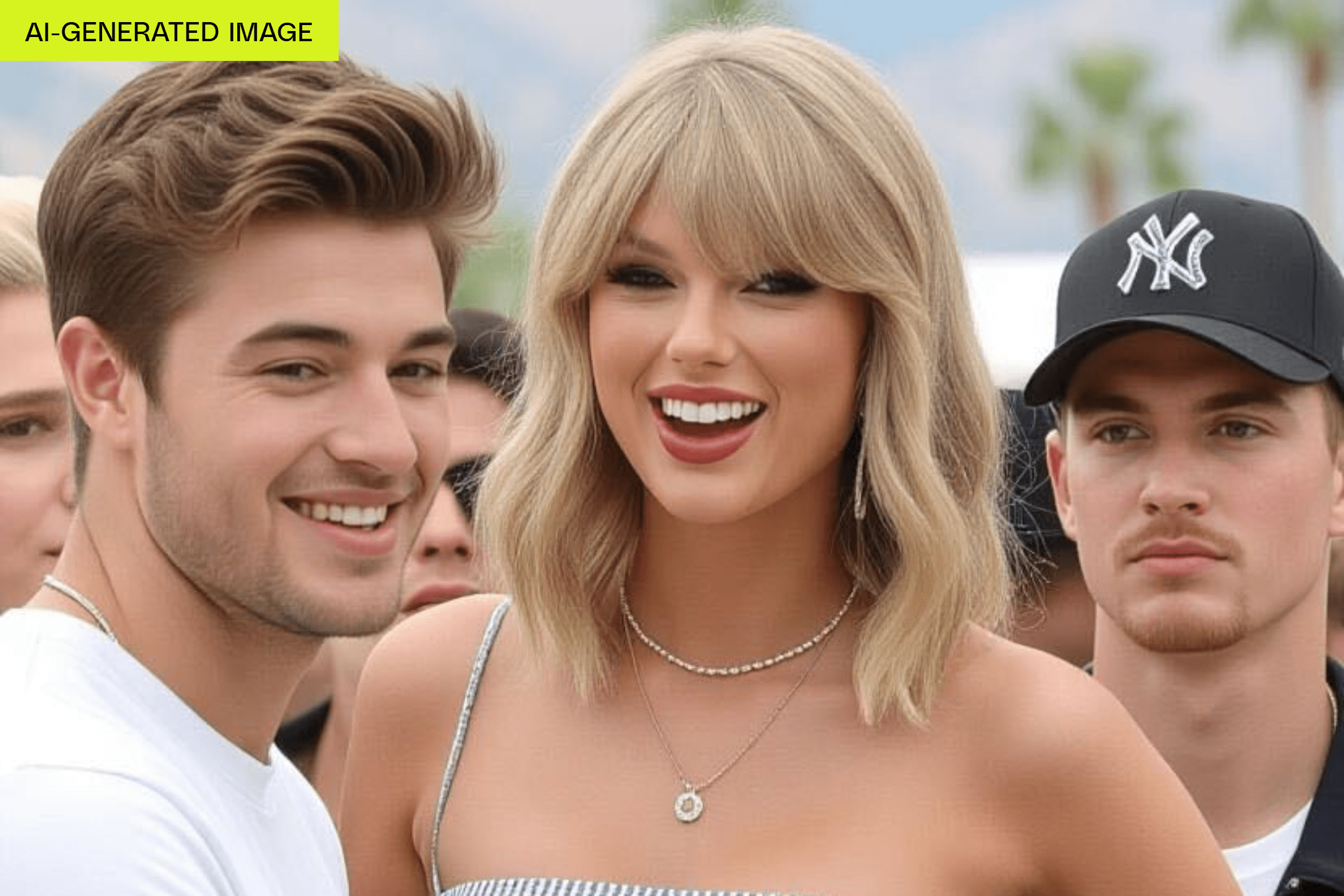

The tool, released by xAI earlier this month, encourages users to create NSFW content via a “Spicy” mode, and the first time The Verge tested it, the tool created topless deepfake videos of Taylor Swift, even without being asked to do so.

Today’s letter was spearheaded by the Consumer Federation of America (CFA) and signed by 14 other consumer protection organizations, including the Tech Oversight Project, the Center for Economic Justice, and the Electronic Privacy Information Center (EPIC). The letter directly references The Verge’s reporting on the Grok-generated celebrity deepfakes. It goes on to say that although the platform doesn’t currently offer “Spicy” mode for real photos uploaded by users (which would be an even more concerning option for revenge porn and other violating practices), it “still generates nude videos from images generated by the tool, which can be used to create images that look like real, specific people. The generation of such videos can have harmful consequences for those depicted and for under-aged users.”

The letter adds that if the restriction on using “Spicy” mode with user-uploaded photos is ever removed, it “would unleash a torrent of obviously nonconsensual deepfakes. In fact, the platform and its chief executive have a penchant for removing moderation safeguards under the guise of ‘free speech.’”